-

About Trey

Trey is the Founder @ Searchkernel, Author of AI-Powered Search and Solr in Action, former CTO @ Presearch, former Chief Algorithms Officer and SVP of Engineering @ Lucidworks, Advisor to the Southern Data Science Conference, and Investor in multiple startups (primarily tech companies focusing on blockchain, artificial intelligence, healthcare, and education).

He is a frequent public speaker on search and data science at conferences around the world, and a frequently published researcher, with research papers and journal articles spanning search and information retrieval, recommendation systems, data science, and analytics.

-

Career

Executive, Technical Founder, Author, Speaker, Investor, Advisor

Founder - Searchkernel

May 2020-Present

Building the next generation of Search.

Chief Technology Officer - Presearch

June 2020-June 2023

Building a web-scale, decentralized search engine, powered by the community.

Chief Algorithms Officer - Lucidworks

December 2018-May 2020

Driving vision and practical application of intelligent data science algorithms to power relevant search experiences for hundreds of the world's biggest and brightest organizations. Primarily focused on R&D, publication, conference presentations, and working with key customers to optimize the intelligence of their search applications.

Advisor - Southern Data Science Conference

December 2016-Present

Member of the Advisory Board for the Southern Data Science Conference, the preeminent data science conference in the southeastern United States.

Advisor - Presearch

June 2017-June 2020

Building a web-scale, decentralized search engine, powered by the community. Advised on the blockchain ecosystem, search technology, open source software strategy, running distributed software teams, and building sustainable open source communities.

SVP of Engineering - Lucidworks

February 2016-November 2018

Growing and empowering a globally-distributed engineering team to build the best in open source (Apache Solr) and commercial (Lucidworks Fusion) search technologies used to power search for many of the best and brightest organizations in the world.

Director of Engineering, Search & Recommendations - CareerBuilder

January 2014-February 2016

I led the engineering department that developed CareerBuilder's global search platform. This primarily involved building data science and software engineering teams to evolve our scalable search platform, our recommendation engine algorithms, and our Big Data analytics products. We specialized in employment search (job search and candidate search), building search and recommendation systems to match the right person with the right job.

Founder and Chief Engineer - Celiaccess

2009-2019

Founded and built the world's first gluten-free search engine, including a gluten-free barcode scanner ("Gluten-free Scanner") and a gluten-free restaurant locator ("Gluten-free Near Me"). The company was profitable within one year and ultimately achieved over 100,000 app downloads and several million searches per year.

Various Engineering / Leadership Roles

July 2007-January 2014

Various prior roles held at CareerBuilder include:

- Director of Engineering, Search & Analytics

- Search Technology Development Manager

- Search Technology Development Team Lead

- Software Engineer II

- Software Engineer I

For more details, see my LinkedIn profile.

-

Books

A selection of books for which I've been an author or contributor

AI-Powered Search

The guide to building continuously-learning search engines

Trey Grainger, Doug Turnbull, & Max Irwin, 2024 (Summer).

Great search is all about delivering the right results. Today’s search engines are expected to be smart, understanding the nuances of natural language queries, as well as each user’s preferences and context. AI-Powered Search teaches you the latest machine learning techniques to create search engines that continuously learn from your users and your content, to drive more domain-aware and intelligent search.

Solr in Action

The best-selling book on the Apache Solr open source search engine

Trey Grainger & Timothy Potter, 2014.

Solr in Action is a comprehensive guide to implementing scalable search using Apache Solr. This clearly written book walks you through well-documented examples ranging from basic keyword searching to scaling a system for billions of documents and queries. It will give you a deep understanding of how to implement core Solr capabilities.

Utilizing Big Data Analytics for Automatic Building of Language-agnostic Semantic Knowledge Bases

Chapter in Distributed Computing in Big Data Analytics: Concepts, Technologies and Applications

Wrote the final chapter of the book (Springer, 2017).

Authors: Khalifeh Al Jadda, Mohammed Korayem, and Trey Grainger.

Relevant Search

The ultimate guide to search relevancy engineering

Doug Turnbull & John Berryman, 2016. Foreword by Trey Grainger.

I contributed the foreword for Relevant Search, the excellent book by Doug Turnbull and John Berryman, covering how to think about relevance engineering and build a highly relevant search system.

-

Education

Stanford University

Information Retrieval and Web Search

4.0 GPA

2014

Pursued master's-level study at Stanford University, taking Information Retrieval & Web Search for university credit under Professor Chris Manning.

Georgia Tech

Executive MBA: Management of Technology

4.0 GPA

2012

Studied business, management of technology, and innovation. Built a viable startup business plan and won first place in the school's business plan competition.

Furman University

Bachelor's: Computer Science, Business, Philosophy

Magna Cum Laude

2007

Graduated with majors in Computer Science, Business & Management, and Philosophy.

-

Videos

A selection of conference and meetup presentations I've delivered

Relevance in the Age of Generative Search

Presented at Southern Data Science Conference 2023 in Atlanta, GA.

AMA with the Authors of AI-Powered Search

Presented at Haystack US 2023.

Keynote: Relevance in the Age of Generative Search

Presented at Haystack US 2023 in Charlottesville, VA.

Data Science for Web3: Building a Decentralized Web Search Engine

Presented at Southern Data Science Conference 2023.

Applying User Signals like a Relevance Engineering Ninja

Presented at Haystack US 2021.

Interpreting Intent with Semantic Knowledge Graphs

Presented at live@Manning Graph Data Science Conference.

Ask Me Anything: AI-Powered Search

Presented at Berlin Buzzwords + Haystack 2020.

Thought Vectors, Knowledge Graphs, and the Curious Death(?) of Keyword Search

Presented at Berlin Buzzwords + Haystack EU 2020 in Berlin, Germany.

Searching for a Better Search

Guest on the Finding Genius Podcast.

Balancing the Dimensions of User Intent

Presented at Haystack EU 2019 in Berlin, Germany.

Reflected Intelligence: Real-world AI in Digital Transformation

Presented at Digital Transformation Conference (Boston 2019) in Boston, MA.

Thought Vectors and Knowledge Graphs in AI-powered Search

Presented at Boston Apache Lucene/Solr Meetup in Boston, MA.

The Next Generation of AI-powered Search

Presented at Activate 2019 in Washington, DC.

Natural Language Search with Knowledge Graphs

Presented at Activate 2019 in Washington, DC.

Natural Language Search with Knowledge Graphs

Presented at Haystack 2019 in Charlottesville, VA.

The Future of Search and AI

Closing Keynote at Activate 2018 in Montreal, Canada.

How to Build a Semantic Search System

Presented at Activate 2018 in Montreal, Canada.

Intent Algorithms: The Data Science of Smart Information Retrieval Systems

Presented at the Southern Data Science Conference 2017 in Atlanta, GA.

The Apache Solr Smart Data Ecosystem

Presented at the DFW Data Science Meetup, January 2017 in Dallas, TX.

Reflected Intelligence: Lucene/Solr as a Self-Learning Data System

Presented at Lucene/Solr Revolution 2016 in Boston, MA.

Searching on Intent: Knowledge Graphs, Personalization, and Contextual Disambiguation

Presented at Bay Area Search Meetup, November 10, 2015.

Leveraging Lucene/Solr as a Knowledge Graph and Intent Engine

Presented at Lucene/Solr Revolution 2015 in Austin, TX.

Semantic & Multilingual Strategies in Lucene/Solr

Presented at Lucene/Solr Revolution 2014 in Washington, DC.

Scaling Recommendations, Semantic Search, & Data Analytics with Solr

Presented at Atlanta Solr Meetup, October 21, 2014 in Atlanta, GA.

Enhancing Relevancy through Personalization and Semantic Search

Presented at Lucene/Solr Revolution EU 2013 in Dublin, Ireland.

Building a Real-time, Solr-powered Recommendation Engine

Presented at Lucene/Solr Revolution 2012 in Boston, MA.

Extending Solr: Building a Cloud-like Knowledge Discovery Platform

Presented at Lucene Revolution 2011 in San Francisco, CA.

-

Slide Decks

Slides from public presentations I've given

from Haystack EU 2019

from Digital Transformation Conference (Boston 2019)

from Boston Apache Lucene/Solr Meetup (October 2019)

from Chicago Apache Lucene & Solr Meetup (September 2019)

Keynote from Activate 2019

from Activate 2019

from DOD & Federal Knowledge Management Symposium 2019

from Haystack 2019

from the Southern Data Science Conference 2019

from Activate 2018

from Activate 2018

from the Southern Data Science Conference, 2018

from the Haystack conference, 2018

from presentation to Furman University's Big Data: Mining & Analytics Class, December 1, 2017

from Lucene/Solr Revolution 2017

from Greenville Data Science Meetup, June 30, 2017

from the Southern Data Science Conference, 2017

from DFW Data Science Meetup, January 9, 2017

from South Big Data Hub Text Data Analysis Panel, December 8, 2016

from Lucene/Solr Revolution 2016

from presentation to Georgia Tech's Data & Visual Analytics Class, March 15, 2016

from Bay Area Search Meetup, November 10, 2015

from Lucene/Solr Revolution, 2015

from Lucene/Solr Revolution 2014

from IEEE Big Data 2014

from Atlanta Solr Meetup, October 21, 2014

from Lucene/Solr Revolution EU 2013

from Lucene/Solr Revolution 2013

from Lucene/Solr Revolution 2012

from Lucene/Solr Revolution 2011

-

Research Publications

Journal and Research Paper Publications

Fully Automated QA System for Large Scale Search and Recommendation Engines Leveraging Implicit User Feedback

WSDM, 2018

In this paper we introduce a fully-automated quality-assurance (QA) system for search and recommendation engines that does not require participation of end users in the process of evaluating any changes in the existing relevancy algorithms. Specifically, the proposed system doesn’t require any manual effort to assign relevancy scores to query/document pairs to generate a gold-standard training set, and it also does not require exposing live users to new algorithms to heuristically measure their reactions. The proposed system has been used successfully on CareerBuilder’s web-scale search engine, where it has been demonstrated to accurately simulate nDCG scores offline for previously untested algorithms. In our tests, these nDCG scores map very closely to corresponding real-world improvements in click-through rates when subsequent A/B tests are performed with live user traffic. Such a system can be used to automatically simulate relevancy benchmarks for thousands of search algorithm variants offline before a single end user is ever exposed to a test.
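To make the nDCG metric in this abstract concrete, here is a minimal Python sketch of standard nDCG@k. It assumes graded relevance labels have already been inferred from implicit feedback; the 0/1/2 grading scheme, the `dcg`/`ndcg` helpers, and the sample rankings are hypothetical, and this is not the paper's method for deriving those labels from user behavior.

```python
import math

def dcg(grades: list[float], k: int) -> float:
    """Discounted cumulative gain over the top-k graded results."""
    # Exponential gain (2^rel - 1) with a log2(position + 1) discount;
    # enumerate() is 0-based, so position + 1 becomes rank + 2.
    return sum((2 ** rel - 1) / math.log2(rank + 2) for rank, rel in enumerate(grades[:k]))

def ndcg(grades: list[float], k: int) -> float:
    """nDCG@k: DCG of the given ranking divided by DCG of the ideal ordering."""
    ideal = dcg(sorted(grades, reverse=True), k)
    return dcg(grades, k) / ideal if ideal > 0 else 0.0

# Hypothetical graded relevance for one query's results, e.g. inferred from
# historical behavior (0 = ignored, 1 = clicked, 2 = converted). Illustrative only.
baseline_ranking = [1, 0, 2, 0, 1]
candidate_ranking = [2, 1, 1, 0, 0]

print(f"baseline  nDCG@5 = {ndcg(baseline_ranking, 5):.3f}")
print(f"candidate nDCG@5 = {ndcg(candidate_ranking, 5):.3f}")
```

The exponential gain is one common convention; a linear gain would also work, and the choice changes how strongly the most relevant results dominate the score.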

Mining Massive Hierarchical Data Using a Scalable Probabilistic Graphical Model

Journal of Information Sciences (Elsevier), 2018

Probabilistic Graphical Models (PGM) are very useful in the fields of machine learning and data mining. The crucial limitation of those models, however, is their scalability. The Bayesian Network, which is one of the most common PGMs used in machine learning and data mining, demonstrates this limitation when the training data consists of random variables that each have a large set of possible values. In the big data era, one could expect new extensions to the existing PGMs to handle the massive amount of data produced these days by computers, sensors and other electronic devices. With hierarchical data - data that is arranged in a tree-like structure with several levels - one may see hundreds of thousands or millions of values distributed over even just a small number of levels. When modeling this kind of hierarchical data across large data sets, unrestricted Bayesian Networks may become infeasible for representing the probability distributions. In this paper, we introduce an extension to Bayesian Networks that can handle massive sets of hierarchical data in a reasonable amount of time and space. The proposed model achieves high precision and high recall when used as a multi-label classifier for the annotation of mass spectrometry data. On another data set of 1.5 billion search logs provided by CareerBuilder.com, the model was able to predict latent semantic relationships among search keywords with high accuracy.

Combining content-based and collaborative filtering for job recommendation system: A cost-sensitive Statistical Relational Learning approach

Journal of Knowledge-based Systems (Elsevier), 2017

Recommendation systems usually involve exploiting the relations among known features and content that describe items (content-based filtering) or the overlap of similar users who interacted with or rated the target item (collaborative filtering). To combine these two filtering approaches, current model-based hybrid recommendation systems typically require extensive feature engineering to construct a user profile. Statistical Relational Learning (SRL) provides a straightforward way to combine the two approaches through its ability to directly represent the probabilistic dependencies among the attributes of related objects. However, due to the large scale of the data used in real world recommendation systems, little research exists on applying SRL models to hybrid recommendation systems, and essentially none of that research has been applied to real big-data-scale systems. In this paper, we proposed a way to adapt the state-of-the-art in SRL approaches to construct a real hybrid job recommendation system. Furthermore, in order to satisfy a common requirement in recommendation systems (i.e. that false positives are more undesirable and therefore should be penalized more harshly than false negatives), our approach can also allow tuning the trade-off between the precision and recall of the system in a principled way. Our experimental results demonstrate the efficiency of our proposed approach as well as its improved performance on recommendation precision.
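The precision/recall trade-off described in this abstract can be illustrated, under a much simpler model than the paper's SRL approach, with generic cost-sensitive thresholding: when a false positive costs more than a false negative, the decision threshold on a predicted probability rises. The functions, costs, and scores below are hypothetical.

```python
def cost_sensitive_threshold(cost_fp: float, cost_fn: float) -> float:
    """Probability above which recommending has the lower expected cost.

    Recommending costs (1 - p) * cost_fp in expectation; not recommending
    costs p * cost_fn. Setting them equal gives p* = cost_fp / (cost_fp + cost_fn).
    """
    return cost_fp / (cost_fp + cost_fn)

def precision_recall(scores, labels, threshold):
    """Precision and recall of the rule 'recommend if score > threshold'."""
    tp = sum(1 for s, y in zip(scores, labels) if s > threshold and y == 1)
    fp = sum(1 for s, y in zip(scores, labels) if s > threshold and y == 0)
    fn = sum(1 for s, y in zip(scores, labels) if s <= threshold and y == 1)
    # Convention: report 1.0 when the denominator is empty.
    precision = tp / (tp + fp) if tp + fp else 1.0
    recall = tp / (tp + fn) if tp + fn else 1.0
    return precision, recall

# Hypothetical predicted probabilities that a user applies to a job, with true outcomes.
scores = [0.95, 0.85, 0.70, 0.60, 0.45, 0.30, 0.20]
labels = [1, 1, 0, 1, 0, 1, 0]

for cost_fp in (1.0, 3.0):  # a false positive costs 1x vs. 3x a false negative
    t = cost_sensitive_threshold(cost_fp, cost_fn=1.0)
    p, r = precision_recall(scores, labels, t)
    print(f"cost_fp={cost_fp}: threshold={t:.2f} precision={p:.2f} recall={r:.2f}")
```

With these toy numbers, tripling the false-positive cost moves the threshold from 0.50 to 0.75, raising precision from 0.75 to 1.00 while recall drops from 0.75 to 0.50.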

Application of Statistical Relational Learning to Hybrid Recommendation Systems

STARAI, 2016

Recommendation systems usually involve exploiting the relations among known features and content that describe items (content-based filtering) or the overlap of similar users who interacted with or rated the target item (collaborative filtering). To combine these two filtering approaches, current model-based hybrid recommendation systems typically require extensive feature engineering to construct a user profile. Statistical Relational Learning (SRL) provides a straightforward way to combine the two approaches. However, due to the large scale of the data used in real world recommendation systems, little research exists on applying SRL models to hybrid recommendation systems, and essentially none of that research has been applied on real big-data-scale systems. In this paper, we proposed a way to adapt the state-of-the-art in SRL learning approaches to construct a real hybrid recommendation system. Furthermore, in order to satisfy a common requirement in recommendation systems (i.e. that false positives are more undesirable and therefore penalized more harshly than false negatives), our approach can also allow tuning the tradeoff between the precision and recall of the system in a principled way. Our experimental results demonstrate the efficiency of our proposed approach as well as its improved performance on recommendation precision.

Entity Type Recognition using an Ensemble of Distributional Semantic Models to Enhance Query Understanding

IEEE COMPSAC, 2016

Macro-optimization of email recommendation response rates harnessing individual activity levels and group affinity trends

IEEE ICMLA, 2016

Recommendation emails are among the best ways to re-engage with customers after they have left a website. While on-site recommendation systems focus on finding the most relevant items for a user at the moment (right item), email recommendations add two critical additional dimensions: who to send recommendations to (right person) and when to send them (right time). It is critical that a recommendation email system not send too many emails to too many users in too short a time window, as users may unsubscribe from future emails or become desensitized and ignore future emails if they receive too many. Also, email service providers may mark such emails as spam if too many of their users are contacted in a short time window. Optimizing email recommendation systems such that they can yield a maximum response rate for a minimum number of email sends is thus critical for the long-term performance of such a system. In this paper, we present a novel recommendation email system that not only generates recommendations, but which also leverages a combination of individual user activity data, as well as the behavior of the group to which they belong, in order to determine each user’s likelihood to respond to any given set of recommendations within a given time period. In doing this, we have effectively created a meta-recommendation system which recommends sets of recommendations in order to optimize the aggregate response rate of the entire system. The proposed technique has been applied successfully within CareerBuilder’s job recommendation email system to generate a 50% increase in total conversions while also decreasing sent emails by 72%.
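Taking the reported figures at face value, and assuming both are measured over comparable periods, the change in conversions per email sent can be worked out directly. Here C and S are hypothetical baseline conversions and sends; a 72% reduction in sends leaves 0.28 S.

```latex
\frac{\text{conversions per send (after)}}{\text{conversions per send (before)}}
  = \frac{1.5\,C \,/\, 0.28\,S}{C \,/\, S}
  = \frac{1.5}{0.28}
  \approx 5.36
```

In other words, under that assumption each email sent yielded roughly 5.4 times as many conversions as before.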

The Semantic Knowledge Graph: A compact, auto-generated model for real-time traversal and ranking of any relationship within a domain

IEEE DSAA, 2016

This paper describes a new kind of knowledge representation and mining system which we are calling the Semantic Knowledge Graph. At its heart, the Semantic Knowledge Graph leverages an inverted index, along with a complementary uninverted index, to represent nodes (terms) and edges (the documents within intersecting postings lists for multiple terms/nodes). This provides a layer of indirection between each pair of nodes and their corresponding edge, enabling edges to materialize dynamically from underlying corpus statistics. As a result, any combination of nodes can have edges to any other nodes materialize and be scored to reveal latent relationships between the nodes. This provides numerous benefits: the knowledge graph can be built automatically from a real-world corpus of data, new nodes - along with their combined edges - can be instantly materialized from any arbitrary combination of preexisting nodes (using set operations), and a full model of the semantic relationships between all entities within a domain can be represented and dynamically traversed using a highly compact representation of the graph. Such a system has widespread applications in areas as diverse as knowledge modeling and reasoning, natural language processing, anomaly detection, data cleansing, semantic search, analytics, data classification, root cause analysis, and recommendation systems. The main contribution of this paper is the introduction of a novel system - the Semantic Knowledge Graph - which is able to dynamically discover and score interesting relationships between any arbitrary combination of entities (words, phrases, or extracted concepts) through dynamically materializing nodes and edges from a compact graphical representation built automatically from a corpus of data representative of a knowledge domain.
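The node/edge idea in this abstract can be sketched in a few lines of Python. This is a toy illustration, not the paper's implementation: a dictionary stands in for the inverted index, each document's term list stands in for the uninverted (forward) index, and edges are scored with a simple z-score of foreground vs. background term frequency, an assumed and simplified relatedness measure. The corpus and the `relatedness` function are hypothetical.

```python
import math
from collections import defaultdict

# Toy corpus standing in for an indexed document collection (hypothetical data).
docs = {
    1: "java developer spring hibernate",
    2: "java developer scala spark",
    3: "registered nurse hospital icu",
    4: "nurse practitioner hospital clinic",
    5: "data scientist python spark",
    6: "java engineer spring microservices",
}

# Inverted index: each term (node) maps to its postings list (a set of doc ids).
index: dict[str, set[int]] = defaultdict(set)
for doc_id, text in docs.items():
    for term in text.split():
        index[term].add(doc_id)

all_docs = set(docs)

def relatedness(foreground: set[int], term: str, background: set[int] = all_docs) -> float:
    """z-score of how over-represented `term` is in the foreground vs. the background."""
    n = len(foreground)
    p = len(index[term] & background) / len(background)  # background probability
    observed = len(index[term] & foreground)             # edge materialized by set intersection
    if n == 0 or p in (0.0, 1.0):
        return 0.0
    expected = n * p
    return (observed - expected) / math.sqrt(n * p * (1 - p))

# Traverse from the node "java": its foreground is the set of docs containing "java".
java_docs = index["java"]
# Forward (uninverted) lookup: candidate terms that appear in the foreground docs.
candidates = {t for d in java_docs for t in docs[d].split()} - {"java"}
for term in sorted(candidates, key=lambda t: (-relatedness(java_docs, t), t))[:3]:
    print(term, round(relatedness(java_docs, term), 2))
```

Because no edges are stored, scoring a relationship for a new combination of nodes (for example, the intersection of the `java` and `spring` postings) only requires another set operation.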

Improving the Quality of Semantic Relationships Extracted from Massive User Behavioral Data

IEEE Big Data, 2015

As the ability to store and process massive amounts of user behavioral data increases, new approaches continue to arise for leveraging the wisdom of the crowds to gain insights that were previously very challenging to discover by text mining alone. For example, through collaborative filtering, we can learn previously hidden relationships between items based upon users' interactions with them, and we can also perform ontology mining to learn which keywords are semantically-related to other keywords based upon how they are used together by similar users as recorded in search engine query logs. The biggest challenge to this collaborative filtering approach is the variety of noise and outliers present in the underlying user behavioral data. In this paper we propose a novel approach to improve the quality of semantic relationships extracted from user behavioral data. Our approach utilizes millions of documents indexed into an inverted index in order to detect and remove noise and outliers.

Query Sense Disambiguation Leveraging Large Scale User Behavioral Data

IEEE Big Data, 2015

Term ambiguity - the challenge of having multiple potential meanings for a keyword or phrase - can be a major problem for search engines. Contextual information is essential for word sense disambiguation, but search queries are often limited to very few keywords, making the available textual context needed for disambiguation minimal or non-existent. In this paper we propose a novel system to identify and resolve term ambiguity in search queries using large-scale user behavioral data. The proposed system demonstrates that, despite the lack of context in most keyword queries, multiple potential senses of a keyword or phrase within a search query can be accurately identified, disambiguated, and expressed in order to maximize the likelihood of fulfilling a user’s information need. The proposed system overcomes the immediate lack of context by leveraging large-scale user behavioral data from historical query logs. Unlike traditional word sense disambiguation methods that rely on knowledge sources or available textual corpora, our system is language-agnostic, is able to easily handle domain-specific terms and meanings, and is automatically generated so that it does not grow out of date or require manual updating as ambiguous terms emerge or undergo a shift in meaning. The system has been implemented using the Hadoop ecosystem and integrated within CareerBuilder’s semantic search engine.

Augmenting Recommendation Systems Using a Model of Semantically-related Terms Extracted from User Behavior

ACM RecSys, 2014

Common difficulties like the cold-start problem and a lack of sufficient information about users due to their limited interactions have been major challenges for most recommender systems (RS). To overcome these challenges and many similar ones that result in low accuracy (precision and recall) recommendations, we propose a novel system that extracts semantically-related search keywords based on the aggregate behavioral data of many users. These semantically-related search keywords can be used to substantially increase the amount of knowledge about a specific user's interests based upon even a few searches and thus improve the accuracy of the RS. The proposed system is capable of mining aggregate user search logs to discover semantic relationships between key phrases in a manner that is language agnostic, human understandable, and virtually noise-free. These semantically related keywords are obtained by looking at the links between queries of similar users which, we believe, represent a largely untapped source for discovering latent semantic relationships between search terms.

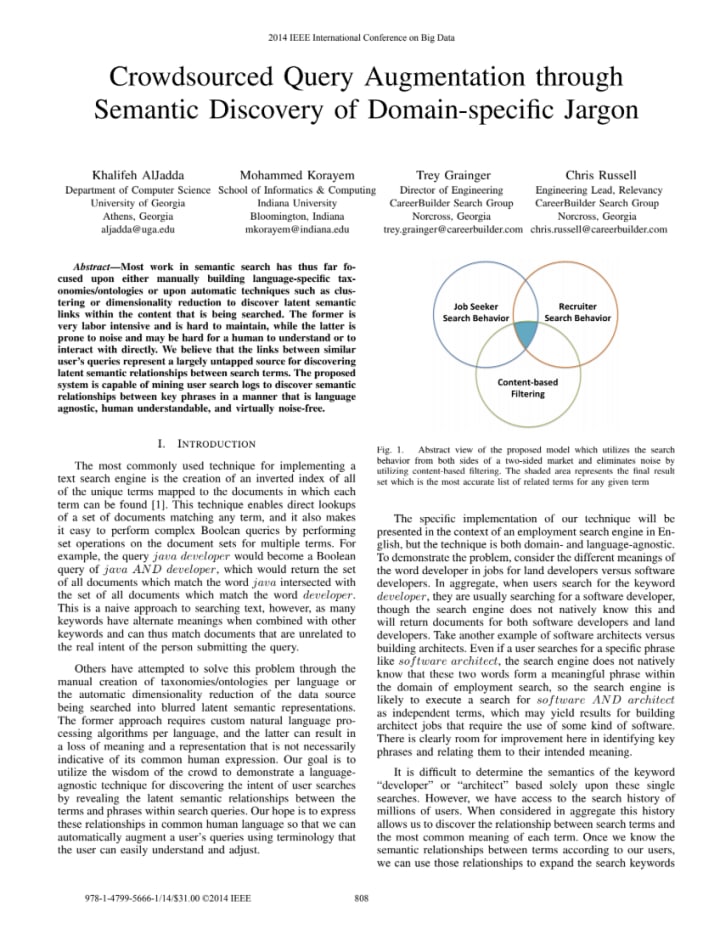

Crowdsourced Query Augmentation through Semantic Discovery of Domain-specific Jargon

IEEE Big Data, 2014

Most work in semantic search has thus far focused upon either manually building language-specific taxonomies/ontologies or upon automatic techniques such as clustering or dimensionality reduction to discover latent semantic links within the content that is being searched. The former is very labor-intensive and is hard to maintain, while the latter is prone to noise and may be hard for a human to understand or to interact with directly. We believe that the links between similar users’ queries represent a largely untapped source for discovering latent semantic relationships between search terms. The proposed system is capable of mining user search logs to discover semantic relationships between key phrases in a manner that is language agnostic, human understandable, and virtually noise-free.
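The idea that links between the same users' queries reveal related terms can be sketched with simple user-level co-occurrence counting in Python. This is an illustrative assumption, not the system described in the paper: the log format, the `related_queries` function, the `min_users` noise threshold, and the conditional-probability score P(b | a) are all hypothetical choices.

```python
from collections import Counter
from itertools import combinations

# Hypothetical search log of (user_id, query) pairs.
search_log = [
    ("u1", "java developer"), ("u1", "j2ee"), ("u1", "spring"),
    ("u2", "java developer"), ("u2", "j2ee"), ("u2", "hibernate"),
    ("u3", "java developer"), ("u3", "spring"),
    ("u4", "registered nurse"), ("u4", "rn"), ("u4", "java developer"),
    ("u5", "registered nurse"), ("u5", "rn"),
]

def related_queries(log, min_users=2):
    """Map each query to queries searched by the same users, scored by P(b | a)."""
    queries_by_user = {}
    for user, query in log:
        queries_by_user.setdefault(user, set()).add(query)

    users_per_query = Counter()
    users_per_pair = Counter()
    for queries in queries_by_user.values():
        users_per_query.update(queries)
        # Count each unordered pair at most once per user.
        users_per_pair.update(combinations(sorted(queries), 2))

    related = {}
    for (a, b), together in users_per_pair.items():
        if together < min_users:  # drop pairs seen for too few users (likely noise)
            continue
        related.setdefault(a, []).append((b, together / users_per_query[a]))
        related.setdefault(b, []).append((a, together / users_per_query[b]))
    # Highest score first; ties broken alphabetically for stable output.
    return {q: sorted(pairs, key=lambda x: (-x[1], x[0])) for q, pairs in related.items()}

for query, pairs in sorted(related_queries(search_log).items()):
    print(query, "->", [(b, round(score, 2)) for b, score in pairs])
```

In this toy log, the single user who searched both "java developer" and "rn" is filtered out by `min_users`, which hints at why noise handling matters for this kind of mining.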

PGMHD: A Scalable Probabilistic Graphical Model for Massive Hierarchical Data Problems

IEEE Big Data, 2014

In the big data era, scalability has become a crucial requirement for any useful computational model. Probabilistic graphical models are very useful for mining and discovering data insights, but they are not scalable enough to be suitable for big data problems. Bayesian Networks particularly demonstrate this limitation when their data is represented using few random variables while each random variable has a massive set of values.

With hierarchical data - data that is arranged in a tree-like structure with several levels - one would expect to see hundreds of thousands or millions of values distributed over even just a small number of levels. When modeling this kind of hierarchical data across large data sets, Bayesian networks become infeasible for representing the probability distributions for the following reasons: i) each level represents a single random variable with hundreds of thousands of values, ii) the number of levels is usually small, so there are also few random variables, and iii) the structure of the network is predefined since the dependency is modeled top-down from each parent to each of its child nodes, so the network would contain a single linear path for the random variables from each parent to each child node. In this paper we present a scalable probabilistic graphical model to overcome these limitations for massive hierarchical data. We believe the proposed model will lead to an easily-scalable, more readable, and expressive implementation for problems that require probabilistic-based solutions for massive amounts of hierarchical data. We successfully applied this model to solve two different challenging probabilistic-based problems on massive hierarchical data sets for different domains, namely, bioinformatics and latent semantic discovery over search logs.

-
